Teachers are drowning in marking. A single class assignment can mean hours of grading, and that's before writing individualized feedback for every student. AI could help, but every existing tool felt like it was trying to replace the teacher rather than support them.

Educator feedback @ education conference

The brief arrived as an idea, not a product. The founder — a teacher themselves — had a clear vision of the problem but needed help translating it into something buildable. We started from scratch together: mapping features, defining users, writing stories, and establishing scope before a single screen was designed.

Having a founder who was also a subject matter expert shaped everything. They weren't just approving decisions; they were feeding me the domain knowledge to make them. Teacher insights came through consistent email notes, classroom observations, and feedback from their educator network.

Investors would occasionally join our calls to review progress. That added a second audience to every design decision. The product had to work for teachers and make sense to people who had never set foot in a modern classroom.

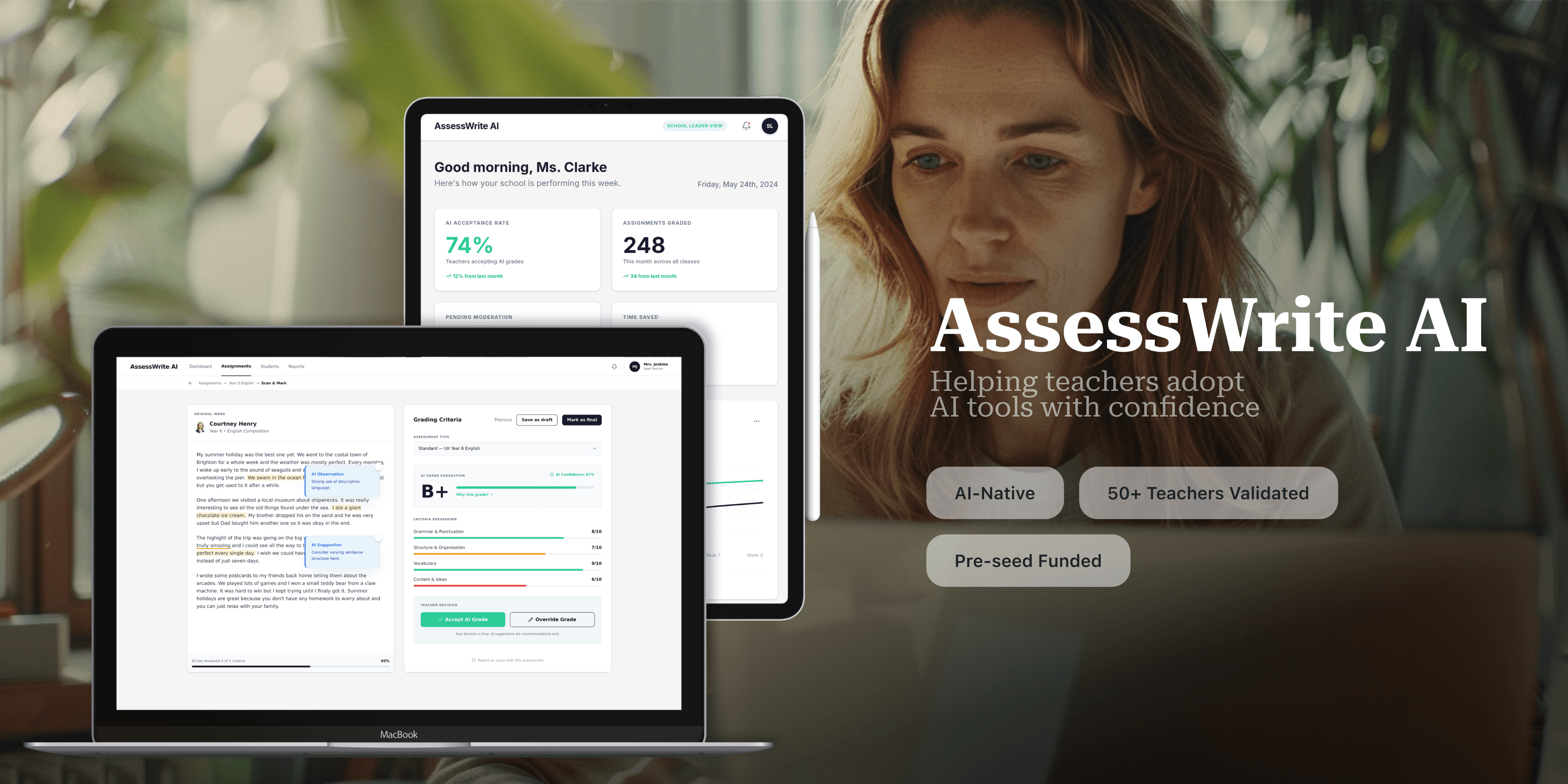

Good products rarely arrive fully formed. AssessWrite AI went through three distinct iterations before reaching the version that was validated by teachers and secured funding.

Iteration 1: Too much, too soon

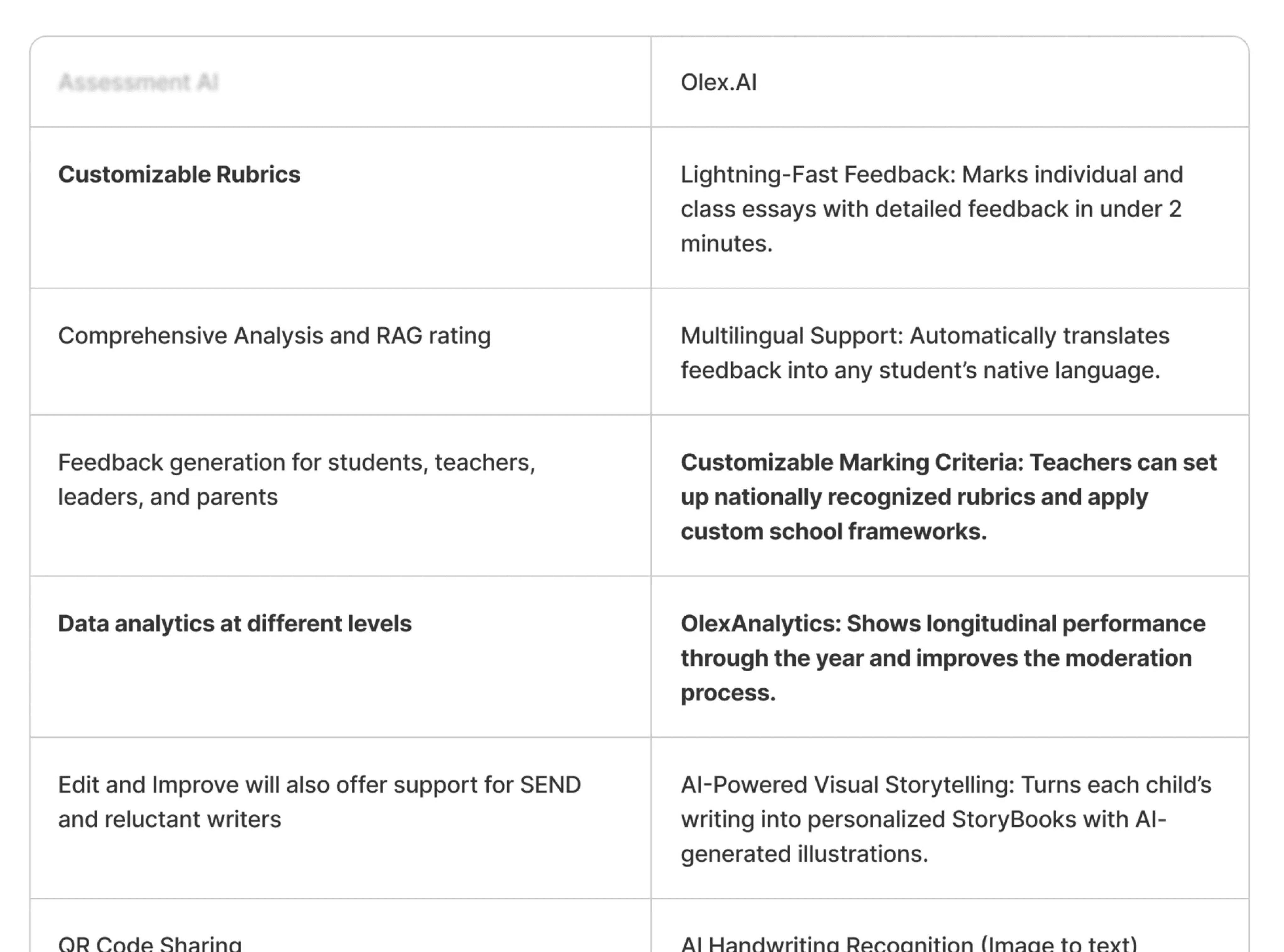

The first version reflected the founder's full vision: four user types, three separate platforms, and a feature set that covered every possible scenario. The design was sound, but the scope was impossible to pitch and impossible to build in a reasonable timeframe. When we reviewed it together, we agreed to focus on two user types.

Iteration 2: Finding the core

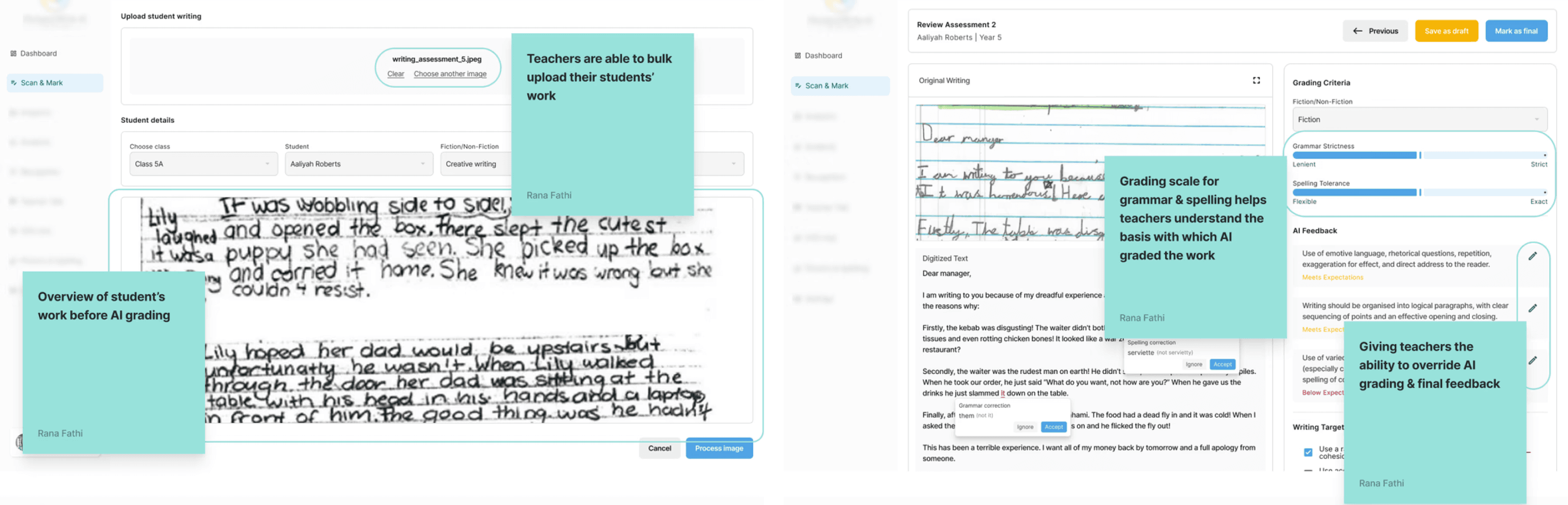

We cut to two user types — teachers and school leaders — and one platform. Everything that didn't directly support the core grading workflow was deferred to a future version. The interface became cleaner, the navigation simpler, and the value proposition sharper.

Iteration 3: Teacher validated

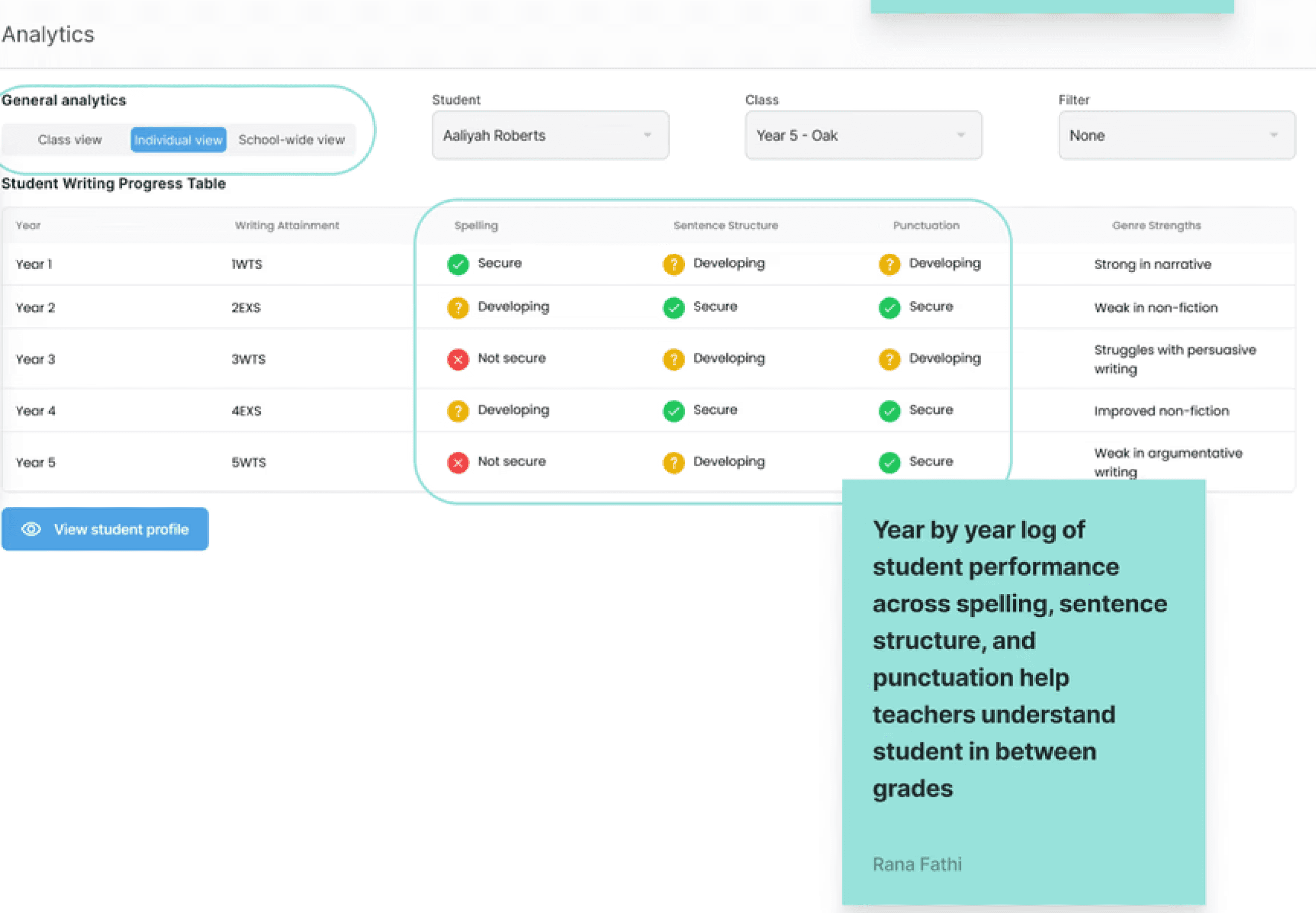

After the founder presented the prototype to 50+ teachers at an education conference, clear patterns emerged in the feedback. Teachers wanted to understand why the AI made its grading decisions, not just what it decided. They wanted more control, more transparency, and faster ways to handle routine work. The third iteration addressed all of it, and became the version that secured pre-seed funding commitments.

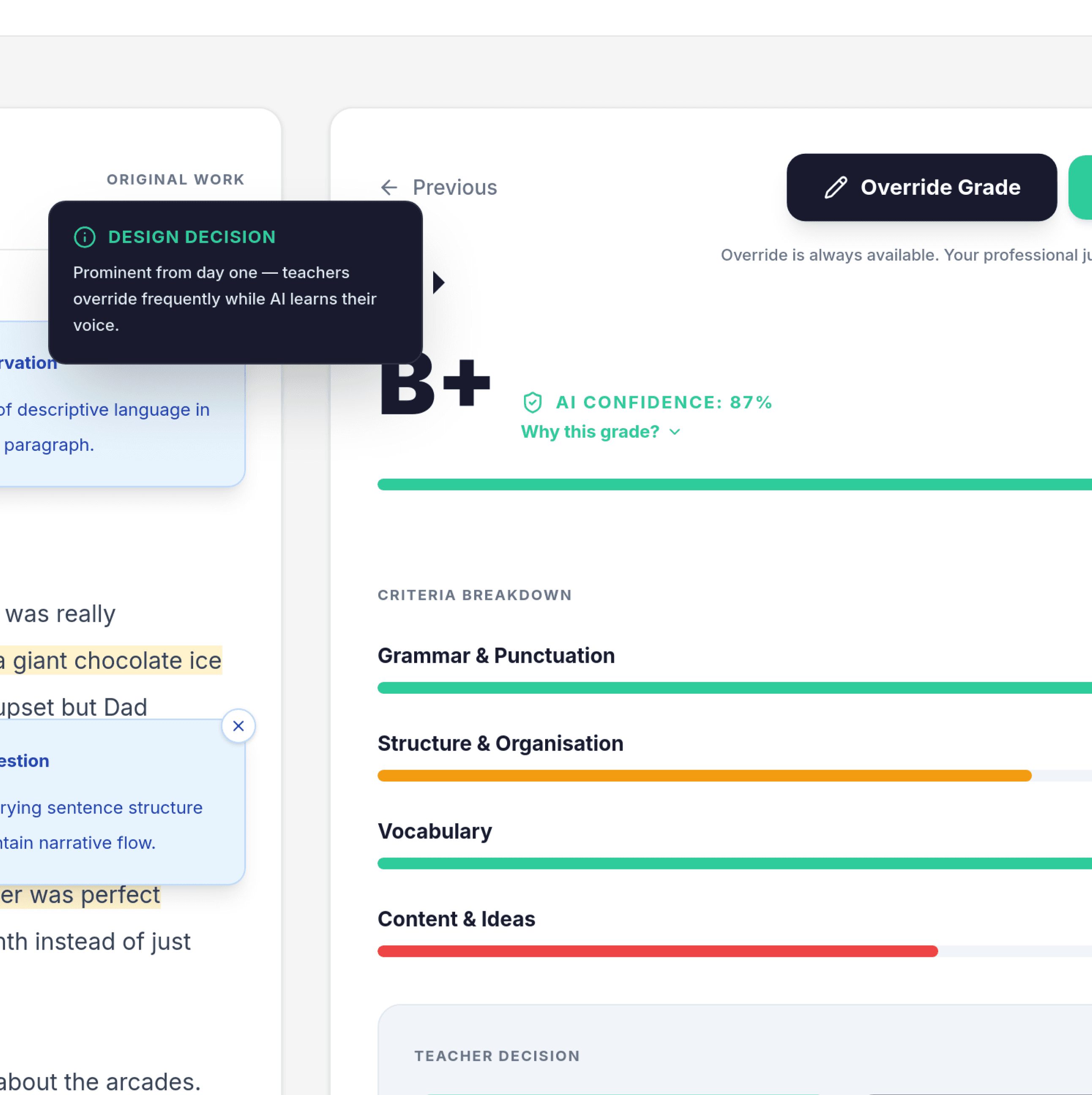

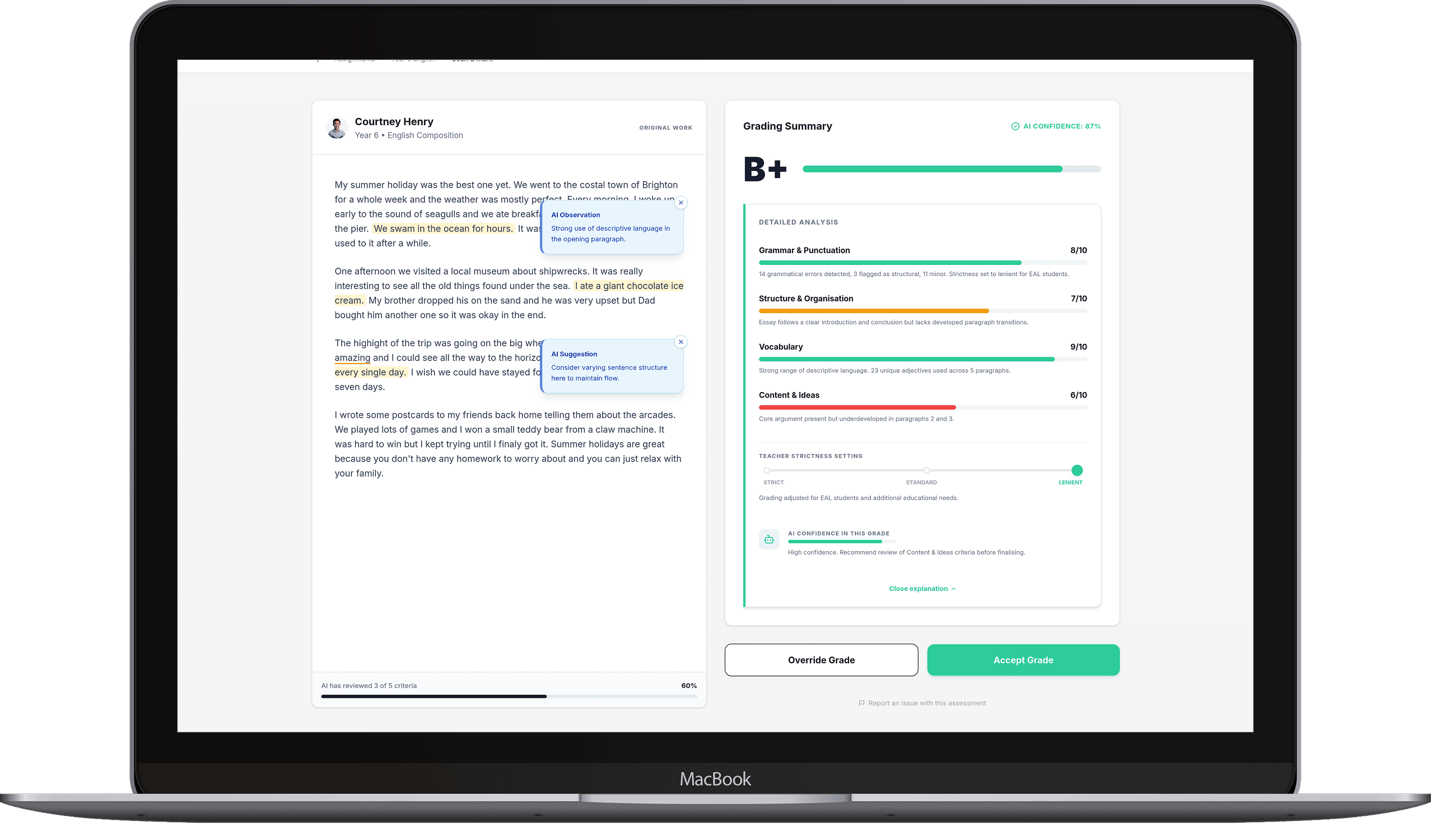

1. Building trust through transparency

I designed a "Why this grade?" feature that surfaced the AI's full reasoning: sentence structure patterns, grammar frequency, vocabulary range, and how each was weighted against the rubric. Crucially, this was cross-referenced with a teacher-set strictness scale — accounting for students with different mother tongues, cultural backgrounds, or additional educational needs. A student who understands the content but communicates it differently shouldn't be penalised by a one-size-fits-all rubric.

The AI also graded its own output, giving teachers a confidence score on every assessment so they knew when to trust it and when to look closer. This wasn't a feature the founder requested; it was simply the natural way I thought about teacher-led grading with AI assistance.
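As a rough illustration only, the logic behind a "Why this grade?" view could be sketched as below. This is not the product's implementation: the case study does not describe the underlying model or data format, so the `Criterion` type, the `explain_grade` function, the strictness rule, and the `review_below` threshold are all hypothetical names and assumptions chosen to show how a weighted rubric, a teacher-set strictness value, and a confidence score might combine into something a teacher can inspect.

```python
from dataclasses import dataclass


@dataclass
class Criterion:
    name: str      # e.g. "vocabulary range"
    score: float   # 0.0 to 1.0: how well the work meets this criterion
    weight: float  # relative importance in the rubric


def explain_grade(criteria, strictness=1.0, confidence=1.0, review_below=0.7):
    """Return a grade plus the per-criterion breakdown behind it.

    Assumption: strictness scales the shortfall from full marks, so a
    value below 1.0 softens penalties and 1.0 applies the rubric as-is.
    """
    total_weight = sum(c.weight for c in criteria)
    breakdown = []
    weighted_sum = 0.0
    for c in criteria:
        # Soften (or keep) the penalty, then clamp to the valid range.
        adjusted = 1.0 - (1.0 - c.score) * strictness
        adjusted = max(0.0, min(1.0, adjusted))
        share = adjusted * c.weight / total_weight
        weighted_sum += share
        breakdown.append({
            "criterion": c.name,
            "raw_score": c.score,
            "adjusted_score": round(adjusted, 3),
            "share_of_grade": round(share, 3),
        })
    return {
        "grade": round(weighted_sum * 100, 1),
        "breakdown": breakdown,
        "confidence": confidence,
        # Flag low-confidence assessments for a closer look by the teacher.
        "needs_review": confidence < review_below,
    }


rubric = [
    Criterion("sentence structure", score=0.8, weight=2.0),
    Criterion("grammar", score=0.6, weight=1.0),
    Criterion("vocabulary range", score=1.0, weight=1.0),
]
result = explain_grade(rubric, strictness=0.5, confidence=0.55)
for row in result["breakdown"]:
    print(row)
print(result["grade"], result["needs_review"])
```

In this sketch, lowering the strictness raises the grade for the same piece of work, and any assessment whose confidence falls under the threshold is flagged so the teacher knows to look closer before accepting it.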

2. Maintaining teacher authority

Every screen in AssessWrite AI reinforced one principle: the teacher has final say.

The override button was placed on every output screen in the brand's primary colour, not buried in a menu. That prominence reflected where the product was in its lifecycle: in the early version, before the AI had been trained to a teacher's individual voice and marking style, overrides were going to happen frequently.

The founder, the business team, and the investors all agreed with this approach. A teacher who felt in control was more likely to adopt the tool, generate training data, and help the AI improve over time. The override button could be reworked to be less prominent once the AI had proven itself. For version one, teacher confidence came first.

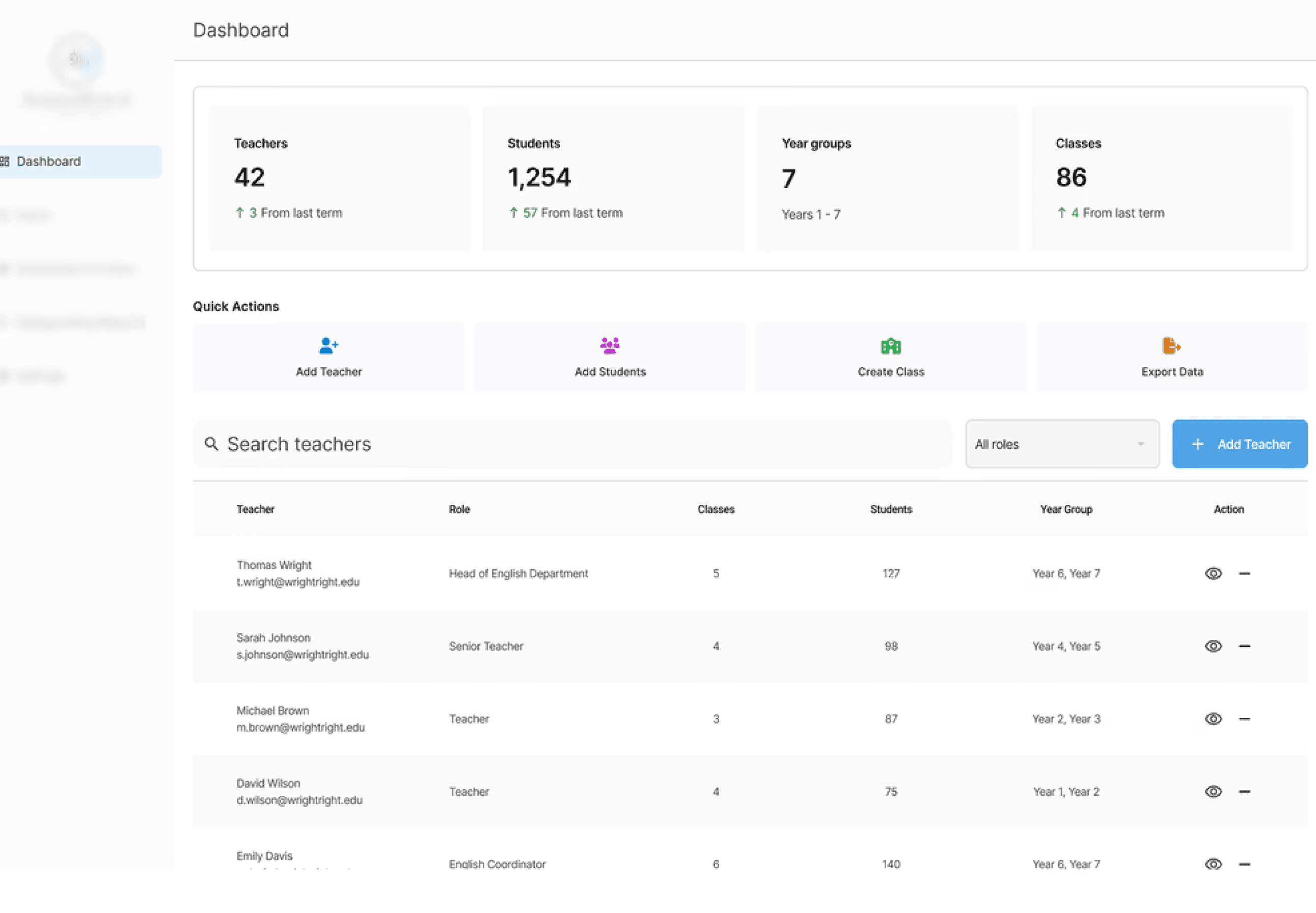

3. Designing for two stakeholders at once

Every design decision in this project had two audiences: the teacher who would use it daily, and the investor who needed to believe it would work.

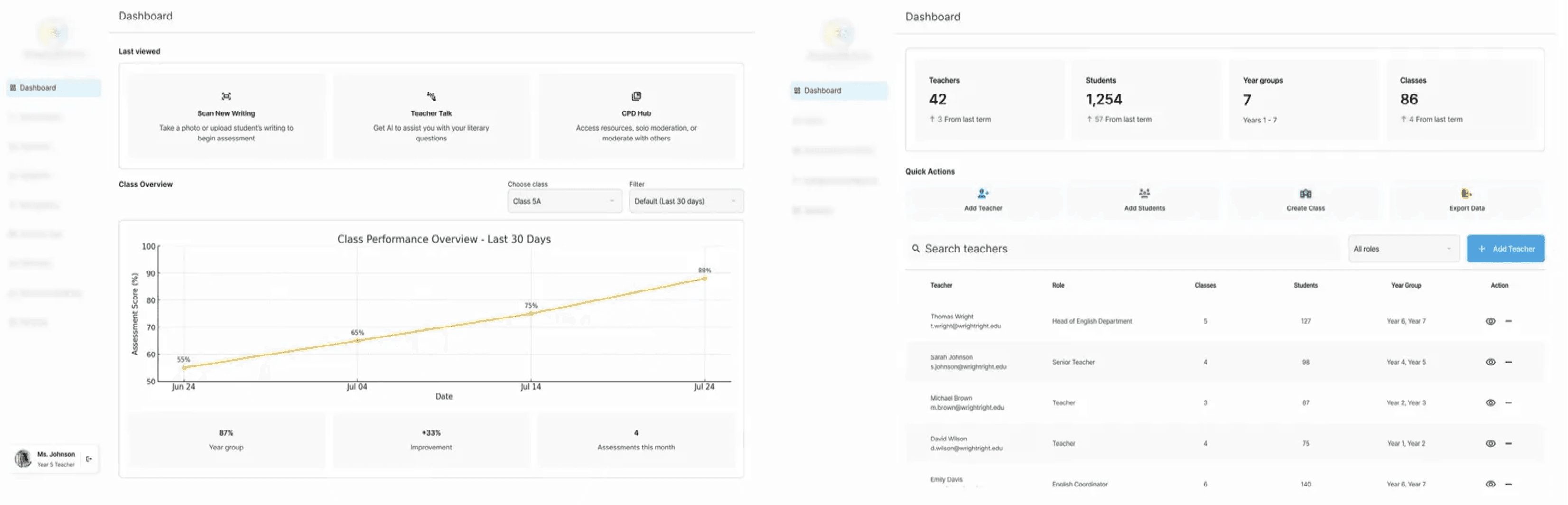

Those goals were mostly aligned: a product teachers trusted was a product worth funding. But there were moments where they diverged, and data visualisation was one of them. Developers can implement charts from libraries quickly, but library-ready charts don't make investors lean forward in their seats.

I designed the performance dashboards and analytics views to be visually compelling, as investors would be in the room when they were presented. The principle I kept coming back to: if it works for the teacher, the investor will follow. But if it only impresses the investor, the teacher will walk away.

AssessWrite AI was presented to 50+ teachers at a national education conference as the first real-world validation of the product outside the founder's own classroom. Teachers engaged positively with the prototype, with many expressing interest in participating in beta testing when the platform launched.

The design work directly contributed to securing pre-seed funding commitments from two partners. The prototype demonstrated both technical feasibility and real teacher validation, de-risking the investment at the earliest and most uncertain stage of the product's life.

The platform is currently awaiting final funding completion to proceed to full development.

Founder, AssessWrite AI

50+ teachers validated

2 funding partners

This was the most stakeholder-complex project I've worked on. The founder was simultaneously the client, the subject matter expert, and a proxy for the end user. Getting that balance right, knowing when to defer to their domain knowledge and when to push back on scope, was the real design challenge underneath all the UI decisions.

If I could go back, I'd involve real teachers earlier. The founder was an excellent proxy, but the conference feedback in Iteration 3 revealed assumptions I'd been carrying since Iteration 1. Earlier access to even three or four teachers would have surfaced those gaps sooner.

What I'm most proud of is that a room full of teachers left that conference wanting to be part of what came next. That's the outcome that matters most.